Lori Niles-Hofmann showed a slide at Learning Technologies last week that I haven’t been able to stop thinking about.

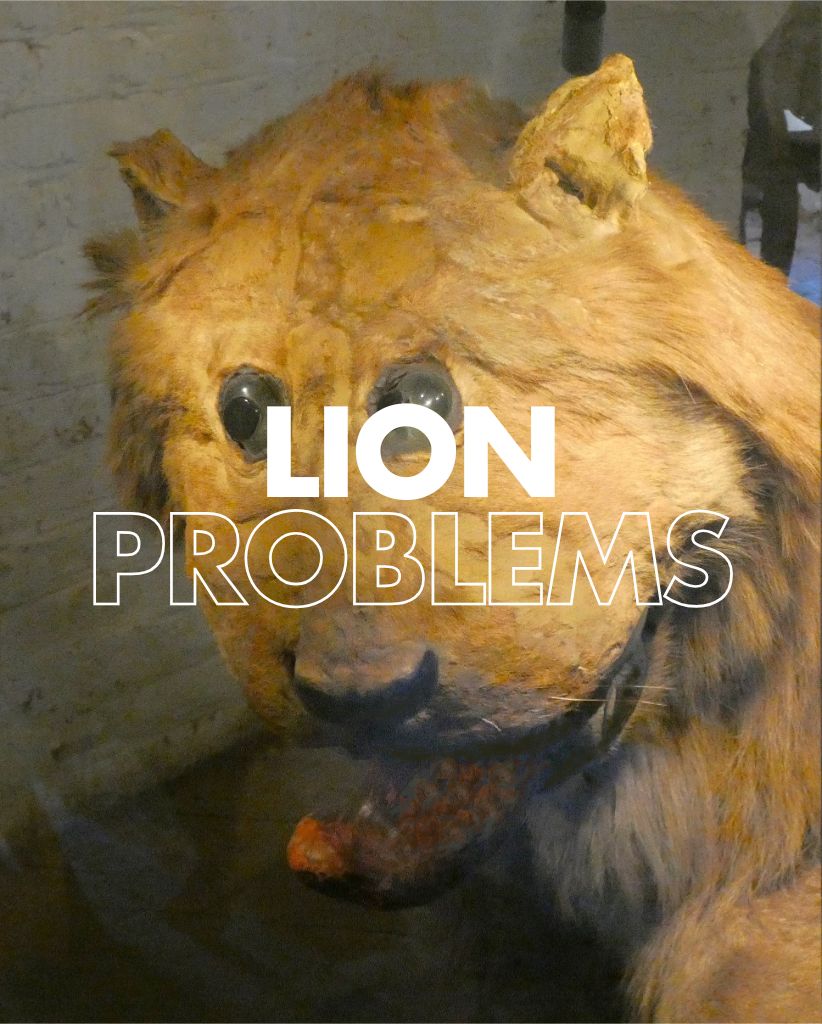

The lion of Gripsholm was preserved by a Swedish taxidermist in 1783. He’d never seen a lion, so he worked from a flag. The result looks like a lion; it has fur, four legs, and a mane. But something is deeply wrong with it. It’s the eyes, the tongue, and an uncanny wrongness you can’t quite name but can’t ignore.

That’s what a lot of AI-generated learning content feels like right now.

I’m not seeing it in organisations yet – most aren’t there. But I’m seeing it in vendor pitches and on LinkedIn every day. Content that has the right shape, the right structure, and the right vocabulary. But there’s something fundamentally off about it.

It’s not that it’s wrong; it’s that it isn’t quite understood. It was reconstructed from a pattern and not from knowledge. The taxidermist wasn’t incompetent – he just didn’t know what a lion actually was.

The market is in a similar place – it rewards the shape of learning. Modules, objectives, scenarios, and knowledge checks. If it looks right in a demo, it sells. AI makes it faster and cheaper to produce things that look right, and that’s not a vendor problem. That’s a procurement problem.

We’re buying lions from people who may only have ever seen flags.

As I say regularly – nuance can’t be automated (I even have badges that say so now). Judgement about what a specific learner in a specific context actually needs is highly unlikely to be generated solely from a prompt. The human in the loop isn’t a compliance step but the thing that makes it learning rather than content.

The lion of Gripsholm is in your inbox and probably in your LinkedIn feed too. The question is whether you can tell the difference.
